What is CrUX

The Chrome UX Report (AKA "CrUX") is a dataset of real user experiences measured by Chrome. Google releases monthly snapshots of over 4 million origins onto BigQuery with stats on web performance metrics like First Contentful Paint (FCP), DOM Content Loaded (DCL), and First Input Delay (FID).

PageSpeed Insights is another tool that integrates with CrUX to provide easy access to origin performance as well as page-specific performance data, in addition to prescriptive information about how to improve the performance of the page.

The CrUX datasets have been around and growing since November 2017, so we can even see historical performance data.

In this post I walk you through the practical steps of how to use it to get insights into your site's performance and how it stacks up against the competition.

How to use it

Writing a few lines of SQL on BigQuery, we can start extracting insights about UX on the web.

SELECT
  SUM(fcp.density) AS fast_fcp
FROM
  `chrome-ux-report.all.201808`,
  UNNEST(first_contentful_paint.histogram.bin) AS fcp
WHERE
  fcp.start < 1000 AND
  origin = 'https://dev.to'

The raw data is organized like a histogram, with each bin having a start time, an end time, and a density value. The query above sums the density of the bins that start under one second to get the percent of "fast" FCP experiences. The results tell us that during August 2018, users on dev.to experienced a fast FCP about 59% of the time.
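To make the histogram arithmetic concrete, here is a minimal JavaScript sketch of the same idea: add up the density of every bin that starts before a threshold. The bin values are made up for illustration and are not real CrUX data, and fastDensity is a hypothetical helper name.

```javascript
// Hypothetical histogram in the shape described above: each bin has a
// start time (ms), an end time (ms), and a density (share of experiences).
// These numbers are illustrative only, not real CrUX data.
var bins = [
  { start: 0, end: 500, density: 0.25 },
  { start: 500, end: 1000, density: 0.25 },
  { start: 1000, end: 1500, density: 0.125 },
  { start: 1500, end: 2000, density: 0.375 }
];

// Sum the density of all bins that start below the threshold,
// mirroring SUM(fcp.density) ... WHERE fcp.start < 1000.
function fastDensity(histogram, thresholdMs) {
  return histogram
    .filter(function (bin) { return bin.start < thresholdMs; })
    .reduce(function (sum, bin) { return sum + bin.density; }, 0);
}

console.log(fastDensity(bins, 1000)); // 0.5
```

Because the densities of an origin's bins add up to 1, the result reads directly as a percentage of experiences.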

Let's say we wanted to compare that with a hypothetical competitor, example.com. Here's how that query would look:

SELECT
  origin,
  SUM(fcp.density) AS fast_fcp
FROM
  `chrome-ux-report.all.201808`,
  UNNEST(first_contentful_paint.histogram.bin) AS fcp
WHERE
  fcp.start < 1000 AND
  origin IN ('https://dev.to', 'https://example.com')
GROUP BY
  origin

Not much different: we just add the other origin and group by origin so we end up with a fast FCP density for each. It turns out that dev.to has a higher density of fast experiences than example.com, whose density is about 43%.

Now let's say we wanted to measure this change over time. Because the tables are all dated as YYYYMM, we can use a wildcard to capture all of them and group them:

SELECT
  _TABLE_SUFFIX AS month,
  origin,
  SUM(fcp.density) AS fast_fcp
FROM
  `chrome-ux-report.all.*`,
  UNNEST(first_contentful_paint.histogram.bin) AS fcp
WHERE
  fcp.start < 1000 AND
  origin IN ('https://dev.to', 'https://example.com')
GROUP BY
  month,
  origin
ORDER BY
  month,
  origin

By plotting the results in a chart (BigQuery can export to a Google Sheet for quick analysis), we can see that dev.to performance has been consistently good, but example.com has had a big fluctuation recently that brought it below its usual density of ~80%.
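The query returns rows in long form (one row per month and origin), while a line chart is usually easier to build from one row per month with a column per origin. Here is a rough sketch of that reshaping in JavaScript. pivotByMonth is a hypothetical helper, and the sample rows are made-up numbers rather than real query output.

```javascript
// Reshape long-form rows ({month, origin, fast_fcp}) into a table with one
// row per month and one column per origin, which is easier to chart.
function pivotByMonth(rows, origins) {
  var byMonth = {};
  rows.forEach(function (row) {
    if (!byMonth[row.month]) {
      byMonth[row.month] = {};
    }
    byMonth[row.month][row.origin] = row.fast_fcp;
  });

  var table = [['month'].concat(origins)];
  Object.keys(byMonth).sort().forEach(function (month) {
    var line = [month];
    origins.forEach(function (origin) {
      // Leave the cell empty when an origin has no data for that month.
      var value = byMonth[month][origin];
      line.push(value === undefined ? '' : value);
    });
    table.push(line);
  });
  return table;
}

// Illustrative rows only.
var sampleRows = [
  { month: '201807', origin: 'https://dev.to', fast_fcp: 0.5 },
  { month: '201808', origin: 'https://dev.to', fast_fcp: 0.75 },
  { month: '201808', origin: 'https://example.com', fast_fcp: 0.25 }
];
console.log(pivotByMonth(sampleRows, ['https://dev.to', 'https://example.com']));
```

Sorting the YYYYMM suffixes as strings keeps the months in chronological order, so the resulting table can be pasted into a sheet and charted as is.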

Ok but how about a solution without SQL?

I hear you. BigQuery is extremely powerful for writing custom queries to slice the data however you need, but using it for the first time can require some configuration, and if you query more than 1 TB in a month you may need to pay for the overages. It also requires some experience with SQL, and without base queries to use as a starting point it's easy to get lost.

There are a few tools that can help. The first is the CrUX Dashboard. You can build your own dashboard by visiting g.co/chromeuxdash.

This will generate a dashboard for you including the FCP distribution over time. No SQL required!

The dashboard also has a hidden super power. Because the data in CrUX is sliced by dimensions like the users' device and connection speed, we can even get aggregate data about the users themselves:

This chart shows that users on dev.to are mostly on their phone and just about never on a tablet.

The connection chart shows a 90/10 split between 4G and 3G connection speeds. These classifications are the effective connection type, meaning that a user on a 4G network experiencing speeds closer to 2G would be classified as 2G, while a desktop user on fast WiFi would be classified as 4G.

The other cool thing about this dashboard is that it's customized just for you. You can modify it however you want. For example, you can add the corresponding chart for example.com to compare stats side by side.

The dashboard is built with Data Studio, which also has convenient sharing capabilities like access control and embeddability.

What's the absolute easiest way to get CrUX data?

Using the PageSpeed Insights web UI, you can simply enter a URL or origin and immediately get a chart. For example, using the origin: prefix we can get the origin-wide FCP and DCL stats for dev.to:

One important distinction of the PSI data is that it's updated daily using a rolling 30-day aggregation window, as opposed to the calendar month releases on BigQuery. This is nice because it enables you to get the latest data. Another important distinction is the availability of page-specific performance data:

This link provides CrUX data for @ben's popular open source announcement. Keep in mind that not all pages (or even origins) are included in the CrUX dataset. If you see the "Unavailable" response then the page may not have sufficient data, as in this case with the AMA topic page:

Also keep in mind that a page that has data for the current 30 day window may not have sufficient data in a future window. So that link to the PSI data for @ben's post may have Unavailable speed data in a few months, depending on the page's popularity. The exact threshold is kept secret to avoid saying too much about the unpopularity of pages outside of the CrUX report.

How do I get page-level data over time?

It's tricky because while PSI provides page-level data, it only gives you the latest 30-day snapshot, not a historical view like on BigQuery. But we can make that work!

PSI has an API. We can write a crafty little script that pings that API every day to extract the performance data.

- To get started, create a new Google Sheet

- Go into the "Tools" menu and select "Script Editor"

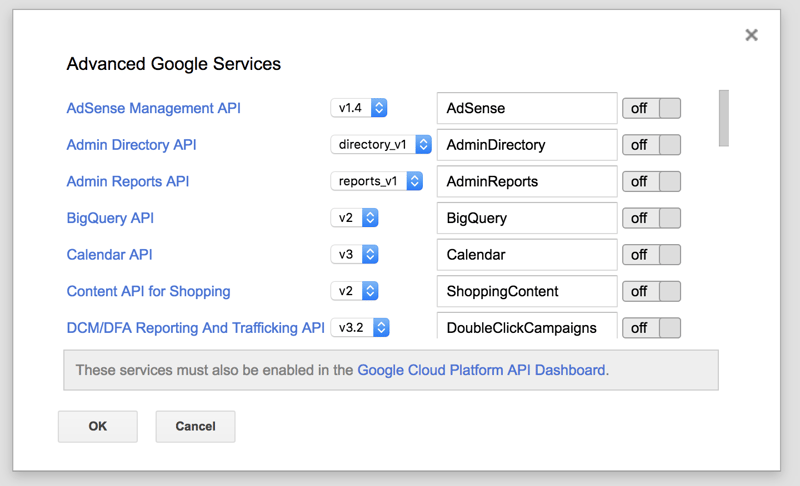

- From the Apps Script editor give the project a name like "PSI Monitoring", go into the "Resources" menu, select "Advanced Google Services", and click the link to enable services in "Google Cloud Platform API Dashboard"

- From the Google Cloud Platform console, search for the PageSpeed Insights API, enable it, and click "Create Credentials" to generate an API key

- From the Apps Script editor, go to "File", "Project properties" and create a new "Script property" named "PSI_API_KEY" and paste in your new API key

Now you're ready for the script. In Code.gs, paste this script:

// Created by Rick Viscomi (@rick_viscomi)
// Adapted from https://ithoughthecamewithyou.com/post/automate-google-pagespeed-insights-with-apps-script by Robert Ellison

// Read the API key saved in the project's script properties.
var scriptProperties = PropertiesService.getScriptProperties();
var pageSpeedApiKey = scriptProperties.getProperty('PSI_API_KEY');

// Origins (with the origin: prefix) or page URLs to monitor.
var pageSpeedMonitorUrls = [
  'origin:https://developers.google.com',
  'origin:https://developer.mozilla.org'
];

// Query PSI for each URL on desktop and mobile and append one row per URL.
function monitor() {
  for (var i = 0; i < pageSpeedMonitorUrls.length; i++) {
    var url = pageSpeedMonitorUrls[i];
    var desktop = callPageSpeed(url, 'desktop');
    var mobile = callPageSpeed(url, 'mobile');
    addRow(url, desktop, mobile);
  }
}

// Fetch the loadingExperience (CrUX) portion of the PSI response.
function callPageSpeed(url, strategy) {
  // Encode the URL so its own query string can't clash with the API's parameters.
  var pageSpeedUrl = 'https://www.googleapis.com/pagespeedonline/v4/runPagespeed?url=' + encodeURIComponent(url) + '&fields=loadingExperience&key=' + pageSpeedApiKey + '&strategy=' + strategy;
  var response = UrlFetchApp.fetch(pageSpeedUrl);
  var json = response.getContentText();
  return JSON.parse(json);
}

// Append today's date, the URL, and the fast FCP proportions to the sheet.
function addRow(url, desktop, mobile) {
  var spreadsheet = SpreadsheetApp.getActiveSpreadsheet();
  var sheet = spreadsheet.getSheetByName('Sheet1');
  sheet.appendRow([
    Utilities.formatDate(new Date(), 'GMT', 'yyyy-MM-dd'),
    url,
    getFastFCP(desktop),
    getFastFCP(mobile)
  ]);
}

// Proportion of FCP experiences in the first ("fast") bin.
// Returns an empty string when PSI has no CrUX data for the URL.
function getFastFCP(data) {
  var experience = data.loadingExperience;
  if (!experience || !experience.metrics || !experience.metrics.FIRST_CONTENTFUL_PAINT_MS) {
    return '';
  }
  return experience.metrics.FIRST_CONTENTFUL_PAINT_MS.distributions[0].proportion;
}

The script reads the API key that you assigned to the script properties and comes with two origins by default. If you want to change this to URL endpoints, simply omit the origin: prefix to get the specific pages.

The script will run each URL through the PSI API for both desktop and mobile and extract the proportion of fast FCP into the sheet (named "Sheet1", so keep any renames in sync).
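If you want more than the fast proportion, here is a sketch of reading all three buckets of the FCP distribution. Treat it as an illustration under a few assumptions: the sample object is a hand-written approximation of the loadingExperience part of a response with made-up numbers, the fast/average/slow ordering of the bins is inferred from the script treating distributions[0] as fast, and readDistribution is a hypothetical helper. It returns null when the metric is missing, which is the case I'd expect for pages or origins without enough CrUX data.

```javascript
// Hand-written approximation of a PSI response; values are illustrative.
var sampleResponse = {
  loadingExperience: {
    metrics: {
      FIRST_CONTENTFUL_PAINT_MS: {
        distributions: [
          { proportion: 0.5 },
          { proportion: 0.25 },
          { proportion: 0.25 }
        ]
      }
    }
  }
};

// Return {fast, average, slow} proportions for a metric, or null when the
// response has no data for it.
function readDistribution(data, metricName) {
  var experience = data && data.loadingExperience;
  var metric = experience && experience.metrics && experience.metrics[metricName];
  if (!metric || !metric.distributions || metric.distributions.length < 3) {
    return null;
  }
  return {
    fast: metric.distributions[0].proportion,
    average: metric.distributions[1].proportion,
    slow: metric.distributions[2].proportion
  };
}

console.log(readDistribution(sampleResponse, 'FIRST_CONTENTFUL_PAINT_MS'));
console.log(readDistribution({}, 'FIRST_CONTENTFUL_PAINT_MS')); // null
```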

You can test it out by opening the "Select function" menu, selecting "monitor", and clicking the triangular Run button. For your first run, you'll need to authorize the script to run the API. If all goes well, you can open up your sheet to see the results.

I've added a header row and formatted the columns (A is a date type, C and D are percentages) for easier reading. From there you can do all of the powerful things Sheets can do, like set up a pivot table or visualize the results.

But what about monitoring?

Luckily, with Apps Script you don't need to run this manually every day. You can set "triggers" to run the monitor function daily, which will append a new row for each URL every day. To set that up, go to the "Edit" menu, select "Current project's triggers" and add a new trigger with the following config:

- run the monitor function

- "Time-driven"

- "Day timer"

- select any hour for the script to run, or leave it on the default "Midnight to 1am"

After saving the trigger, you should be able to return to this sheet on a daily basis to see the latest performance stats for all of the monitored URLs or origins.
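If you'd rather not click through the trigger dialog, the same daily schedule can likely be created from code with Apps Script's ScriptApp trigger builder. This is a sketch rather than part of the original script: createDailyTrigger is a hypothetical function name, and it assumes the monitor function lives in the same project.

```javascript
// Create a time-driven trigger that runs monitor() once a day,
// during the midnight-to-1am hour of the script's time zone.
function createDailyTrigger() {
  return ScriptApp.newTrigger('monitor')
    .timeBased()
    .everyDays(1)
    .atHour(0)
    .create();
}
```

Run it once from the editor. Each run creates another trigger, so running it repeatedly would append duplicate rows every day.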

Just give me something I can clone!

Here's the sheet I made. Go to "File > Make a copy..." to clone it. Everything is now set up for you except for the API key property and the daily trigger. Follow the steps above to set those up. You can also clear the old data and overwrite the sample URLs to customize the analysis. Voila!

Wrapping up

This post explored four different ways to get real user insights out of the Chrome UX Report:

- BigQuery

- CrUX Dashboard

- PageSpeed Insights

- PageSpeed Insights API

These tools enable developers of all levels of expertise to make use of the dataset. In the future, I hope to see even more ambitious use of the data. For example, what if the tool you use to monitor your site's real user performance could also show you how it compares to your competition? Third party integrations like that could unlock some very powerful use cases to better understand the user experience.

I've also written about how we can combine this with other datasets to better understand the state of the web as a whole. For example, I joined CrUX with HTTP Archive data to analyze the real user performance of the top CMSs.

The dataset isn't even a year old yet and there's so much potential for it to be a real driver for change for a better user experience!

If you want to learn more about CrUX you should read the official developer documentation or watch the State of the Web video I made with more info about how it works. There's also the @ChromeUXReport account that I run if you have any questions.

Top comments (35)

How do I get the customized CrUX dashboard? I clicked the Chrome UX dashboard link, and I am only able to see the FCP chart.

The only option I see is to edit the origin name. I am not able to customize the metrics and dimensions to match my needs.

As you showed, how do I compare two websites, and how do I see the users' distribution of devices?

Great question. You're right, it's not obvious. I will write up a short guide on how to make a competitive dashboard.

After playing with it, I found out how to see the device distribution and connection type, but I'm still not able to compare with competitors. One question: any idea why the perf data (fcp) is unnested in the FROM clause of the SQL?

You can use this dashboard with competitors!

datastudio.google.com/u/0/reportin...

Thank you so much Hulya ...I used your report. It is working good.

Hi !

I saw that the FID metric has been recently added to the PSI reports.

How can we access this new metric from the API?

I tried to adapt the script in the Google Sheet example you gave us, Rick, but I need some help.

Thanks

Hey! FID data is only available in v5 of the PageSpeed Insights API, and we have v4 hard-coded in the Apps Script.

Here's an example of a v5 API query with FID in the results: developers.google.com/apis-explore...

To get FID from the v5 API results, we'd also need a new function to extract it from the JSON data:
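A minimal sketch of such a function, assuming the v5 response exposes FID under loadingExperience.metrics as FIRST_INPUT_DELAY_MS with the same distributions shape the script already reads for FCP. The name getFastFID is illustrative.

```javascript
// Mirror of getFastFCP for First Input Delay. Returns null instead of
// throwing when the response has no FID data.
function getFastFID(data) {
  var metrics = data && data.loadingExperience && data.loadingExperience.metrics;
  var fid = metrics && metrics.FIRST_INPUT_DELAY_MS;
  if (!fid || !fid.distributions || !fid.distributions.length) {
    return null;
  }
  return fid.distributions[0].proportion;
}
```

With the request URL switched from v4 to v5, addRow could then append getFastFID(desktop) and getFastFID(mobile) as two extra columns.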

Thanks Rick !

It's Ok and i have my FID metrics :-)

Other question : I was monitoring the median FCP but, in the V5 API, the median is no longer in the json response...

Do you know why ?

If i want to continue with this metric and keep history of it : i have to stay on the V4 version ?

You mention that people should plug their URL into PageSpeed Insights to see if CrUX data is available for the URL, but it's cumbersome to come up with a list of URLs and test each one. Is there a way to get a list of URLs that have data in CrUX for a given domain?

To check if a single origin or URL is in CrUX, the PSI web UI is the quickest way.

If you have a batch of known URLs you can use a script similar to the PSI Monitoring code to test them all against the PSI API.

If you have a batch of known origins, you could write a query against the BigQuery dataset similar to SELECT DISTINCT origin FROM chrome-ux-report... WHERE origin IN (a,b,c).

To get all unknown URLs for a given origin, there is no equivalent queryable dataset. The only scenario I can imagine this info being provided is if the requester can verify permission from the webmaster.

This was super insightful, thanks a lot for putting this all up here, Rick.

P.S. Long time no see. 🙌

Thanks Ahmad!

One thing folks, make sure all the URLs are in lowercase — since DomainName like CamelCase in my case 🤣 didn't work on page insights.

Yes and generally I'd recommend people plug their URLs into PageSpeed Insights as a first step to 1) see if it's supported by CrUX ("Unavailable" or not), and 2) resolve it to its canonical URL. Then you can take that and plug it into BigQuery, Data Studio, or the PSI API with confidence.

Makes sense. Thanks for explaining. ✅

Hey Rick,

I've adapted the Google Sheets + Apps Script + daily monitor and all is well, except that I keep running into Quota exceeded errors. I don't understand this at all, as the quotas are way higher than what I need, e.g. 100 requests per 100 seconds. My script does a request to the API once a day, for 5 URLs. The Google Cloud Console shows I've done 11 requests today and yet the Quota exceeded error is returned.

Any tips for getting this solved?

As it is now, I can't rely on the data getting pulled in.

Hi Aaron. Can you add me as an editor of your script/sheet so I can debug?

Hi Rick, sent DM via Twitter ... and you took a look, thanks so much !!

8/6/2019 the PSI code I got from this article started throwing this error: TypeError: Cannot read property "metrics" from undefined. (line 40, file "Code"). Anyone else?

Could it be related to the new Chromium stuff that's happening to Google tools? webmasters.googleblog.com/2019/08/...

Hi Rick,

I am relatively new to Java but is it possible to get the rating (Fast,Average or Slow) as well as the median FCP and DCL for each of the origins? Like the one that is showing on the PSI web UI.

Thanks!

This has been awesome for me, but on 19/02/07 it stopped working on a few of the competitors I was tracking.

I get the following error: TypeError: Cannot read property "metrics" from undefined. (line 46, file "Code")

For 5 of 12 to stop working overnight that can't be a coincidence, any ideas?

It's stopped on one of my sites as well so that might help diagnose the issue....

Howdy Rick,

When adding the PSI_API_KEY to script properties I can not fill in the API KEY, there is no field available to enter the KEY. Am I missing a step? Or I authorised the PSI API in the GCP.

Thanks in advance.

Hi Rick, Thanks for the useful presentation on Core Web Vitals, etc. at GDGNYC yesterday.

I copied the Apps Script sheet that you referred to above and also in the presentation.

I needed to change one line:

var pageSpeedUrl = 'googleapis.com/pagespeedonline/v5/...' + url + '&fields=loadingExperience&key=' + pageSpeedApiKey + '&strategy=' + strategy;

I changed from v4 to v5

I'm not sure if others also would be affected so I post this in case it will help others.

Ralph

Hi Ralph, glad you found it useful!

This is an old blog post for using the PSI API. If you want to use the CrUX API, I'd recommend taking a look at this Apps Script file instead: github.com/GoogleChrome/CrUX/blob/...
