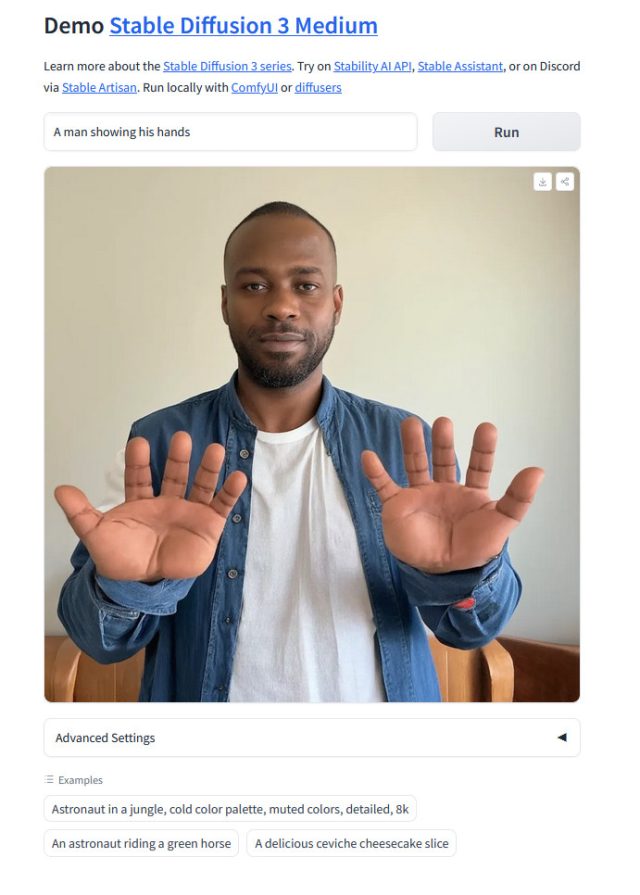

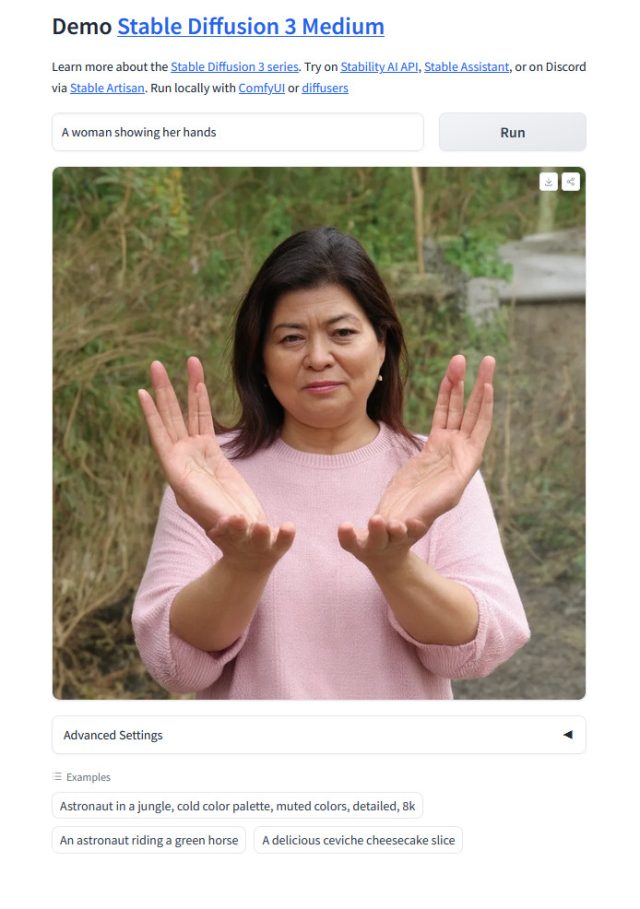

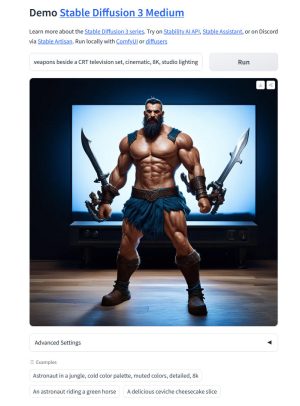

On Wednesday, Stability AI released weights for Stable Diffusion 3 Medium, an AI image-synthesis model that turns text prompts into AI-generated images. Its arrival has been ridiculed online, however, because it generates images of humans in a way that seems like a step backward from other state-of-the-art image-synthesis models like Midjourney or DALL-E 3. As a result, it can churn out wild, anatomically incorrect visual abominations with ease.

A Reddit thread titled "Is this release supposed to be a joke? [SD3-2B]" details the spectacular failures of SD3 Medium at rendering humans, especially extremities like hands and feet. Another thread, titled "Why is SD3 so bad at generating girls lying on the grass?", shows similar issues, but for entire human bodies.

Hands have traditionally been a challenge for AI image generators due to a lack of good examples in early training data sets, but more recently, several image-synthesis models seem to have overcome the issue. In that sense, SD3 appears to be a huge step backward for the image-synthesis enthusiasts who gather on Reddit, especially compared to recent Stability releases like SD XL Turbo in November.

"It wasn't too long ago that StableDiffusion was competing with Midjourney, now it just looks like a joke in comparison. At least our datasets are safe and ethical!" wrote one Reddit user.

AI image fans are so far blaming Stable Diffusion 3's anatomy failures on Stability's insistence on filtering out adult content (often called "NSFW" content) from the SD3 training data that teaches the model how to generate images. "Believe it or not, heavily censoring a model also gets rid of human anatomy, so... that's what happened," wrote one Reddit user in the thread.

Basically, any time a user prompt homes in on a concept that isn't represented well in the AI model's training dataset, the image-synthesis model will confabulate its best interpretation of what the user is asking for. And sometimes that can be completely terrifying.

The release of Stable Diffusion 2.0 in 2022 suffered from similar problems depicting humans, and AI researchers soon discovered that censoring adult content that contains nudity could severely hamper an AI model's ability to generate accurate human anatomy. At the time, Stability AI reversed course with SD 2.1 and SD XL, regaining some of the abilities lost by strongly filtering NSFW content.

Who would have ever guessed?

Just goes to show that having a very broad, varied, and well tagged dataset is essential for a model to give good results. Even if a large chunk of that dataset is of various Pokemon going at it all hot and heavy, lol.