On Sunday, Nvidia CEO Jensen Huang reached beyond Blackwell and revealed the company's next-generation AI-accelerating GPU platform during his keynote at Computex 2024 in Taiwan. Huang also detailed plans for an annual tick-tock-style upgrade cycle for Nvidia's AI acceleration platforms, mentioning an upcoming Blackwell Ultra chip slated for 2025 and a subsequent platform called "Rubin" set for 2026.

Nvidia's data center GPUs currently power a large majority of cloud-based AI models, such as ChatGPT, in both development (training) and deployment (inference) phases, and investors are keeping a close watch on the company, expecting it to keep that run going.

During the keynote, Huang seemed somewhat hesitant to make the Rubin announcement, perhaps wary of invoking the so-called Osborne effect, whereby a company's premature announcement of the next iteration of a tech product eats into the current iteration's sales. "This is the very first time that this next click has been made," Huang said, holding up his presentation remote just before the Rubin announcement. "And I'm not sure yet whether I'm going to regret this or not."

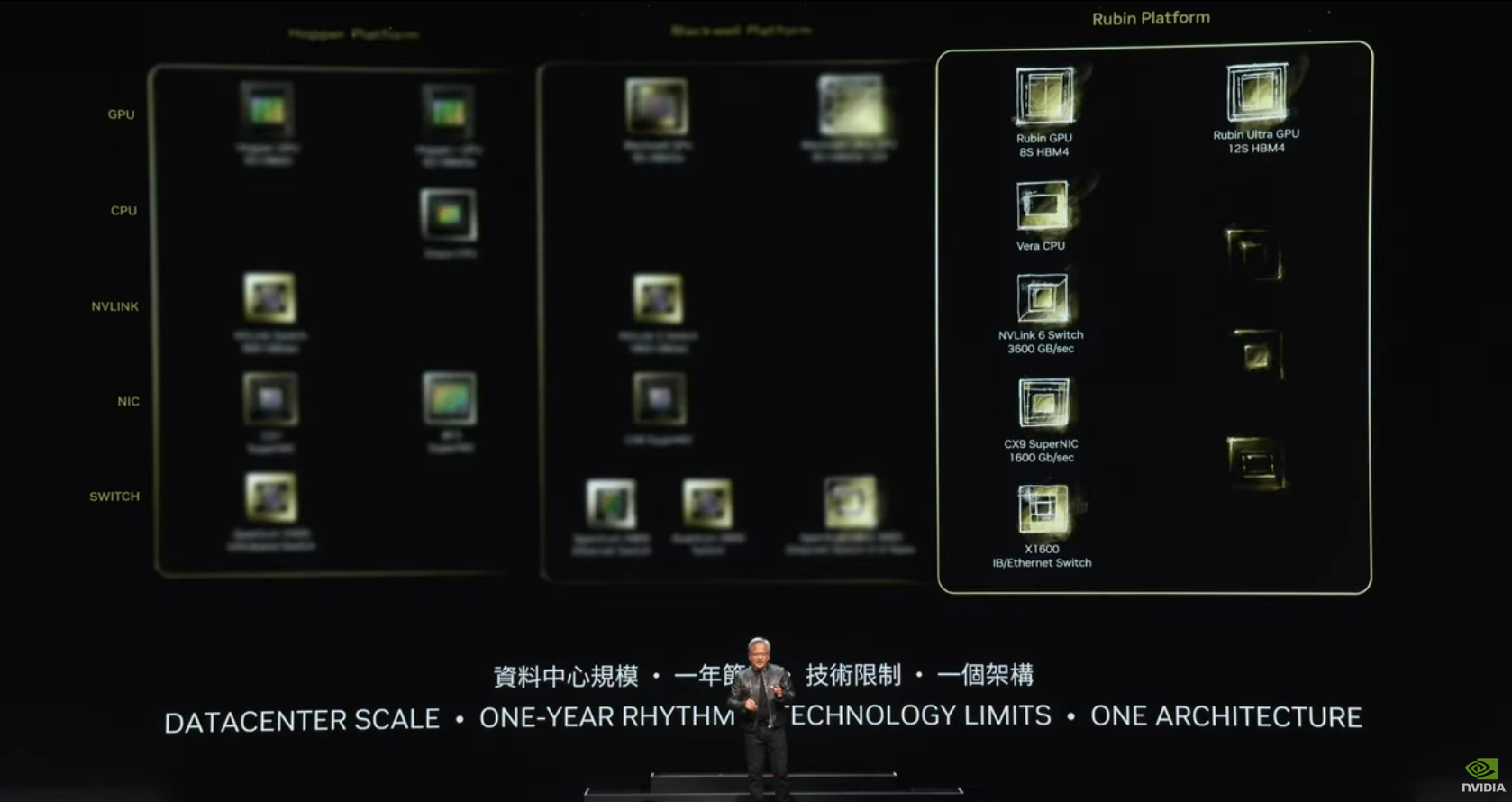

The Rubin AI platform, expected in 2026, will use HBM4 (a new form of high-bandwidth memory) and NVLink 6 Switch, operating at 3,600GBps. Following that launch, Nvidia will release a tick-tock iteration called "Rubin Ultra." While Huang did not provide extensive specifications for the upcoming products, he promised cost and energy savings related to the new chipsets.

During the keynote, Huang also introduced a new ARM-based CPU called "Vera," which will be featured on a new accelerator board called "Vera Rubin," alongside one of the Rubin GPUs.

Much like Nvidia's Grace Hopper architecture, which combines a "Grace" CPU and a "Hopper" GPU to pay tribute to the pioneering computer scientist of the same name, Vera Rubin refers to Vera Florence Cooper Rubin (1928–2016), an American astronomer who made discoveries in the field of deep space astronomy. She is best known for her pioneering work on galaxy rotation rates, which provided strong evidence for the existence of dark matter.

Anyone who wants to stay in the game and be a significant AI player just doesn't seem to have another choice but to buy what Nvidia has currently, with the stickiness and all the action happening in CUDA, with its massive prebuilt libraries and armies of ML developers. Even the AMD option being 10K vs 30-35K doesn't seem to change Nvidia being the one with quasi-infinite demand, despite there being a 3.5x cheaper option. It's largely in the software ecosystem, though the hardware is also very good. So I can see his confidence in mentioning this.

That said, I've seen the bullwhip effect many times now. Long term, AI will be huge, but there's always a chance we've gotten frothy short term. I'd never bet against Jen-Hsun long term. He's probably one of the best-executing CEOs right now, and he seems to be able to do it without shitposting on X.