On Thursday, Google capped off a rough week of providing inaccurate and sometimes dangerous answers through its experimental AI Overview feature by authoring a follow-up blog post titled "AI Overviews: About last week." In the post, attributed to Google VP Liz Reid, head of Google Search, the firm formally acknowledged issues with the feature and outlined steps taken to improve a system that appears flawed by design, even if the company doesn't realize it is admitting as much.

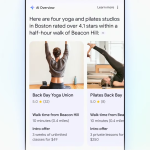

To recap, the AI Overview feature—which the company showed off at Google I/O a few weeks ago—aims to provide search users with summarized answers to questions by using an AI model integrated with Google's web ranking systems. Right now, it's an experimental feature that is not active for everyone, but when a participating user searches for a topic, they might see an AI-generated answer at the top of the results, pulled from highly ranked web content and summarized by an AI model.

While Google claims this approach is "highly effective" and on par with its Featured Snippets in terms of accuracy, the past week has seen numerous examples of the AI system generating bizarre, incorrect, or even potentially harmful responses, as we detailed in a recent feature where Ars reporter Kyle Orland replicated many of the unusual outputs.

Drawing inaccurate conclusions from the web

Given the circulating AI Overview examples, Google almost apologizes in the post and says, "We hold ourselves to a high standard, as do our users, so we expect and appreciate the feedback, and take it seriously." But Reid, in an attempt to justify the errors, then goes into some very revealing detail about why AI Overviews provide erroneous information:

AI Overviews work very differently than chatbots and other LLM products that people may have tried out. They’re not simply generating an output based on training data. While AI Overviews are powered by a customized language model, the model is integrated with our core web ranking systems and designed to carry out traditional “search” tasks, like identifying relevant, high-quality results from our index. That’s why AI Overviews don’t just provide text output, but include relevant links so people can explore further. Because accuracy is paramount in Search, AI Overviews are built to only show information that is backed up by top web results.

This means that AI Overviews generally don't “hallucinate” or make things up in the ways that other LLM products might.

Here we see the fundamental flaw of the system: "AI Overviews are built to only show information that is backed up by top web results." The design is based on the false assumption that Google's page-ranking algorithm favors accurate results and not SEO-gamed garbage. Google Search has been broken for some time, and now the company is relying on those gamed and spam-filled results to feed its new AI model.
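
To make that dependency concrete, here is a minimal Python sketch of a "summarize only what the top results say" pipeline. It is an illustration under stated assumptions, not Google's implementation: the `Page` type, the `rank_score` field, the `overview` function, and the sample pages are all hypothetical. The point is only that such a pipeline never consults accuracy, so whatever ranks highest is what gets shown.

```python
from dataclasses import dataclass


@dataclass
class Page:
    url: str
    rank_score: float  # hypothetical ranking signal; NOT a measure of accuracy
    claim: str
    accurate: bool     # ground truth, which the pipeline below never reads


def top_results(pages, k=1):
    """Return the k highest-ranked pages, ordered by rank_score only."""
    return sorted(pages, key=lambda p: p.rank_score, reverse=True)[:k]


def overview(pages, k=1):
    """Toy 'overview': repeat the claims made by the top-ranked pages."""
    return [p.claim for p in top_results(pages, k)]


# Hypothetical index: a heavily optimized page outranks a reliable one.
pages = [
    Page("https://example.com/seo-gamed", 0.93, "claim from an SEO-gamed page", False),
    Page("https://example.com/reference", 0.71, "claim from a reliable reference", True),
]

print(overview(pages))
```

In this toy setup the output is "backed up by top web results" and still wrong, because the ranking step, not the summarizer, decided what counted as a trustworthy source.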

Where does that prose come from, if it's not understanding what it's writing about? It's doing text summarization, which in this case seems to be driven by two things: (1) the words in the texts it's summarizing, and (2) the statistical(-like) models of what words typically follow what other words in English. The result is that it pulls some words out of the texts it's summarizing, and then glues them together in a way that makes good sensible English. The problem is that it misses a lot when it summarizes.
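
A toy sketch of the second ingredient, "what words typically follow what other words," is below. It is a deliberately crude bigram model in Python, written only to illustrate the idea; real LLMs are far more sophisticated and are not bigram models, and the function names and the sample sentence are made up for this example.

```python
from collections import Counter, defaultdict


def build_bigrams(text):
    """Count, for each word, which words follow it in the source text."""
    words = text.lower().split()
    follows = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows


def generate(follows, start, length=8):
    """Chain words together by always taking the most frequent follower."""
    out = [start]
    for _ in range(length - 1):
        options = follows.get(out[-1])
        if not options:
            break  # no known follower; stop early
        out.append(options.most_common(1)[0][0])
    return " ".join(out)


# Hypothetical source text, loosely modeled on the console example.
source = "the console was released in 1993 the other console was released later"
follows = build_bigrams(source)
summary = generate(follows, "the")
print(summary)
```

Every adjacent pair of words in the output appears somewhere in the source, so it reads like plausible English, but nothing in the procedure tracks which console a date belongs to or what came before what.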

The screenshot of the video game console summary illustrates this (although of course it's impossible to know whether these were the exact inputs that generated the output). It picked up the fact that the Atari Jaguar was released in 1993, but when it generated the summary, it didn't understand that the other two consoles came later.

I'm really not seeing why this should be considered an acceptable thing. Why should we have to accept things that are flat out broken, especially when Google had something that worked, and worked very well, not 10 years ago? Their old, normal search might have had this come up, but it would be presented in context, and people would realize it's a silly goof. And it would have been like the eighth result on the page, below real, actual results.

Quite frankly, I'm really sick and tired of MBAs and finance assholes ruining everything that's good.

Most people look at you quizzically and ask, “Can they just take the boat?” Their only confusion is that the answer seems so obvious.

Here’s ChatGPT’s answer:

I mentioned a goat, a man, and crossing a river. The AI gets my question confused with the goat, cabbage, wolf riddle, so it starts using that to autocorrect an answer.

My son has figured out dozens of these types of examples. He also says the AIs have no concept of knowledge or truth. They will blindly generate a wrong answer just as quickly as a correct answer.

There might be a place for AIs, but he believes LLMs are far from the answer. Google would be better off using an AI with pattern recognition to filter optimized SEO crap out of its search results than giving people answers when Google itself has no idea whether they’re any good.