CUPERTINO, Calif.—Going into the Vision Pro demo room at Apple’s WWDC conference, I wasn’t sure what to expect. The keynote presentation, which showed everything from desktop productivity apps to dinosaurs circling a Vision Pro user in space, seemed impressive, but augmented reality promotional videos often do.

They depict a seamless experience in which the elements of digital space merge completely with the user’s actual surroundings. When you put on the headset, though, you’ll often find that the promotional video was pure aspiration and reality still has some catching up to do. That was my experience with HoloLens, and it has been that way with consumer AR devices like Nreal, too.

That was not my experience with Vision Pro. To be clear, it wasn’t perfect. But it’s the first time I’ve tried an AR demo and thought, “Yep, what they showed in the promo video was pretty much how it really works.”

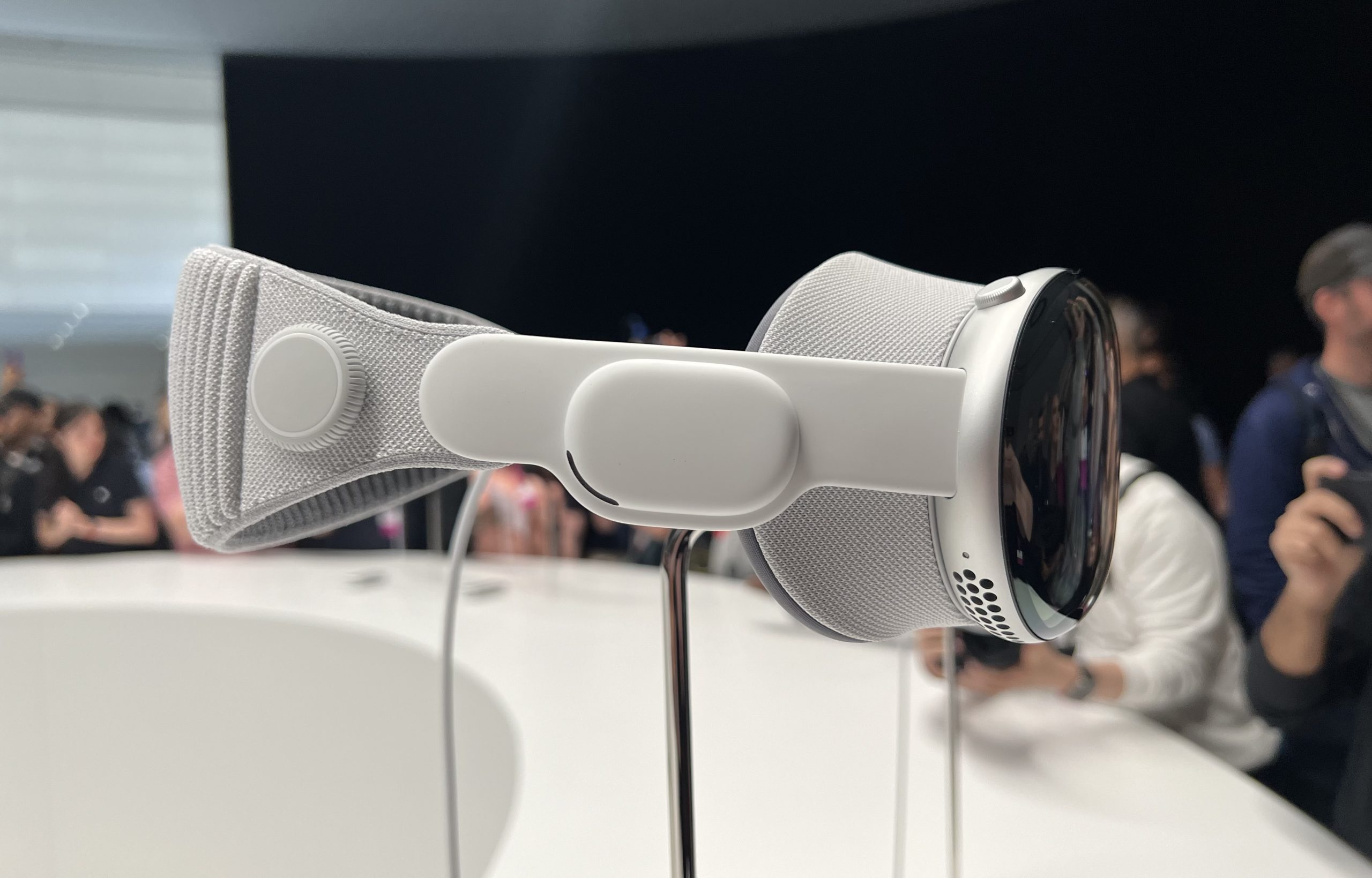

(Quick note: Apple wouldn’t allow photos of me wearing the headset—or any other photos during the demo, for that matter. The photos in this article are of a headset put on display after Monday’s keynote.)

Getting set up

Before I was able to put on Vision Pro and try it, Apple gathered some information about my vision—specifically, that I was wearing contact lenses and that I'm nearsighted but not farsighted. This was to see if I needed corrective vision inserts, as glasses would not fit in the headset. Since I was wearing contacts, I didn’t.

An Apple rep also handed me an iPhone, which I used to scan my face with the TrueDepth sensor array. This was to create a virtual avatar, called a "persona," for FaceTime calls (more on that shortly) and to pick the right modular components for the headset to make sure it fit my head.
