Electricity supply is becoming the latest chokepoint to threaten the growth of artificial intelligence, according to leading tech industry chiefs, as power-hungry data centers add to the strain on grids around the world.

Billionaire Elon Musk said this month that while the development of AI had been “chip constrained” last year, the latest bottleneck to the cutting-edge technology was “electricity supply.” Those comments followed a warning by Amazon chief Andy Jassy this year that there was “not enough energy right now” to run new generative AI services.

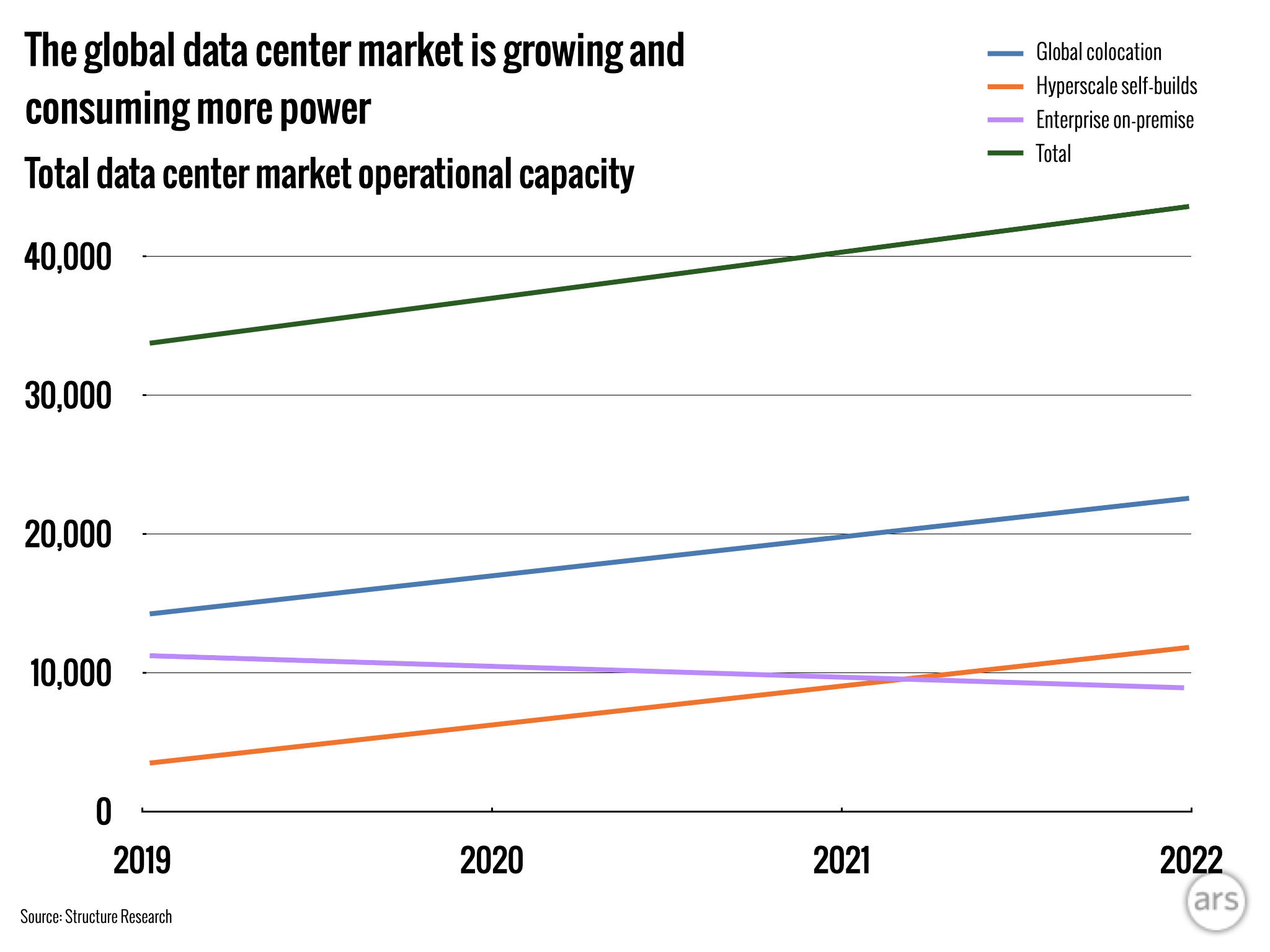

Amazon, Microsoft, and Google parent Alphabet are investing billions of dollars in computing infrastructure as they seek to build out their AI capabilities, including in data centers that typically take several years to plan and construct.

But some of the most popular places for building the facilities, such as northern Virginia, are facing capacity constraints which, in turn, are driving a search for suitable sites in growing data center markets globally.

“Demand for data centers has always been there, but it’s never been like this,” said Pankaj Sharma, executive vice president at Schneider Electric’s data center division.

At present, “we probably don’t have enough capacity available” to run all the facilities that will be required globally by 2030, said Sharma, whose unit is working with chipmaker Nvidia to design centers optimized for AI workloads.

“One of the limitations of deploying [chips] in the new AI economy is going to be ... where do we build the data centers and how do we get the power,” said Daniel Golding, chief technology officer at Appleby Strategy Group and a former data center executive at Google. “At some point the reality of the [electricity] grid is going to get in the way of AI.”
