📌 This is an official PyTorch implementation of [ECCV 2022] - TinyViT: Fast Pretraining Distillation for Small Vision Transformers.

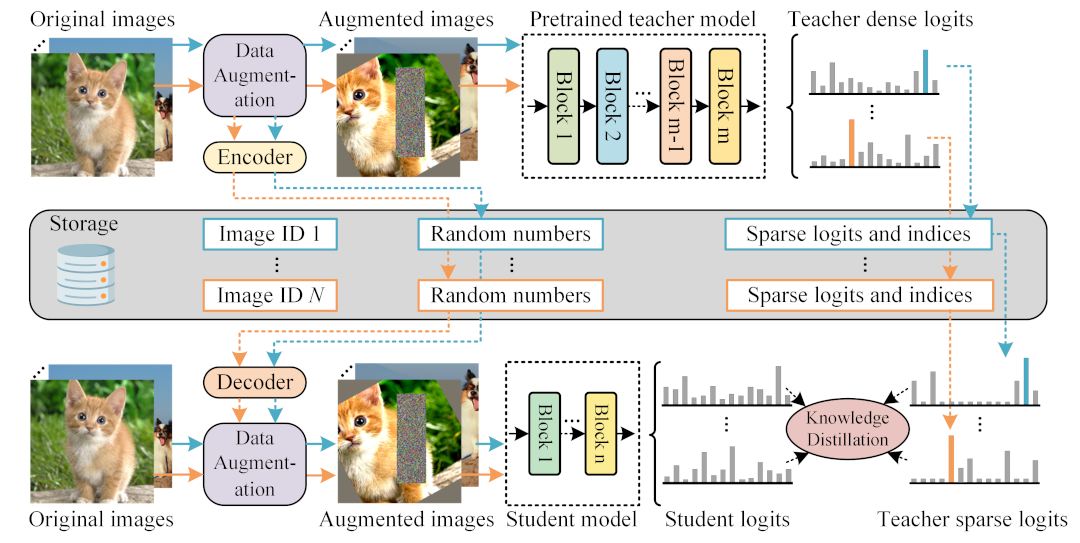

TinyViT is a new family of tiny and efficient vision transformers pretrained on large-scale datasets with our proposed fast distillation framework. The central idea is to transfer knowledge from large pretrained models to small ones. The logits of large teacher models are sparsified and stored on disk in advance, which saves the memory and computation overhead of running the teacher during student training.
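Below is a minimal sketch of the sparsify-then-restore idea. It assumes, as a simplification, that the teacher's top-K probabilities are kept together with their class indices. When a dense soft label is needed again, the leftover probability mass is spread uniformly over the remaining classes. The helper names `sparsify_logits` and `restore_soft_label` are hypothetical and are not part of this repository.

```python
import math
from typing import List, Tuple


def softmax(logits: List[float]) -> List[float]:
    # Subtract the max for numerical stability before exponentiating.
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def sparsify_logits(logits: List[float], k: int) -> Tuple[List[float], List[int]]:
    """Keep only the k largest teacher probabilities and their class indices (hypothetical helper)."""
    probs = softmax(logits)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    return [probs[i] for i in top], top


def restore_soft_label(values: List[float], indices: List[int], num_classes: int) -> List[float]:
    """Rebuild a dense soft label; mass outside the top-k is shared uniformly (assumption)."""
    rest = num_classes - len(indices)
    fill = (1.0 - sum(values)) / rest if rest > 0 else 0.0
    dense = [fill] * num_classes
    for v, i in zip(values, indices):
        dense[i] = v
    return dense


# Only (values, indices) would be written to disk; the teacher is not needed afterwards.
values, indices = sparsify_logits([2.0, 1.0, 0.1, -1.0, 3.0], k=2)
soft_label = restore_soft_label(values, indices, num_classes=5)
```

Storing K pairs instead of all C class scores is what makes the on-disk cache affordable when C is large, as with the 21,841 IN-22k classes.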

🚀 TinyViT with only 21M parameters achieves 84.8% top-1 accuracy on ImageNet-1k, and 86.5% accuracy at 512x512 resolution.

☀️ We are hiring research interns for neural architecture search, tiny transformer design, and model compression projects: [email protected].

- TinyViT-21M pretrained on IN-22k achieves 84.8% top-1 accuracy on IN-1k, and 86.5% accuracy at 512x512 resolution.
- TinyViT-21M trained from scratch on IN-1k without distillation achieves 83.1% top-1 accuracy, with 4.3 GFLOPs and 1,571 images/s throughput on a V100 GPU.
- TinyViT-5M reaches 80.7% top-1 accuracy on IN-1k with 3,060 images/s throughput.
- Save teacher logits once, and reuse the saved sparse logits to distill arbitrary students without the overhead of the teacher model. The saved logits take 16 GB and 481 GB of storage for IN-1k (300 epochs) and IN-22k (90 epochs), respectively.
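The storage figures in the last highlight can be sanity-checked with back-of-the-envelope arithmetic. The sketch below assumes K = 10 kept logits per image per epoch for the 1,281,167 IN-1k training images. Each kept logit is assumed to be a 2-byte float16 value plus a 2-byte int16 class index. These encoding choices are illustrative assumptions, not details taken from this README, and `estimate_storage_gb` is a hypothetical helper.

```python
def estimate_storage_gb(num_images: int, epochs: int, k: int,
                        value_bytes: int = 2, index_bytes: int = 2) -> float:
    """Rough size (decimal GB) of top-k sparse logits saved for every image in every epoch."""
    total_bytes = num_images * epochs * k * (value_bytes + index_bytes)
    return total_bytes / 1e9


# IN-1k train split, 300 epochs, assumed k=10 with float16 values and int16 indices.
in1k_gb = estimate_storage_gb(num_images=1_281_167, epochs=300, k=10)
print(f"{in1k_gb:.1f} GB")  # prints "15.4 GB"
```

Under these assumptions the estimate falls in the same range as the reported 16 GB. Storage grows linearly in both the number of epochs and K, which is why the IN-22k cache is much larger.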

- Efficient Distillation. The teacher logits can be saved in parallel and reused for arbitrary student models, avoiding the cost of re-running the large teacher model's forward pass.
- Reproducibility. We provide the hyper-parameters for IN-1k training, IN-22k pre-training with distillation, IN-22k-to-1k fine-tuning, and higher-resolution fine-tuning. In addition, all training logs are public (in Model Zoo).
- Ease of Use. One file builds a TinyViT model: `models/tiny_vit.py` defines the TinyViT model family.

  ```python
  from tiny_vit import tiny_vit_21m_224

  model = tiny_vit_21m_224(pretrained=True)
  # `image` is assumed to be a preprocessed (N, 3, 224, 224) float tensor
  output = model(image)
  ```

  An inference script is provided in `inference.py`.
- Extensibility. Add custom datasets, student models, and teacher models with no need to modify your code. The class `DatasetWrapper` wraps a general dataset to support saving and loading sparse logits, so knowledge distillation only needs the saved logits of the models.
- Public teacher model. We provide CLIP-ViT-Large/14-22k, a powerful teacher model for pretraining distillation (Acc@1 85.894, Acc@5 97.566 on IN-1k). We finetuned the CLIP-ViT-Large/14 released by OpenAI on IN-22k.
- Online Logging. wandb is supported for checking the results anytime, anywhere.
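The following minimal sketch shows why saved logits remove the teacher from the student's training loop. It implements a save-once, reuse-many store. `SparseLogitStore`, `WithTeacherLogits`, and `toy_teacher` are hypothetical names and do not reproduce the repository's `DatasetWrapper`. The store is keyed by (epoch, sample index) on the assumption that each epoch's augmented view gets its own saved logits. It is kept in memory instead of on disk to keep the example short.

```python
from typing import Dict, List, Sequence, Tuple

SparseLogits = Tuple[List[float], List[int]]  # (top-k logit values, class indices)


class SparseLogitStore:
    """Hypothetical in-memory stand-in for sparse logits that would live on disk."""

    def __init__(self) -> None:
        self._data: Dict[Tuple[int, int], SparseLogits] = {}

    def save(self, epoch: int, index: int, logits: Sequence[float], k: int) -> None:
        # Keep only the k largest raw logits together with their class indices.
        top = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)[:k]
        self._data[(epoch, index)] = ([logits[i] for i in top], top)

    def load(self, epoch: int, index: int) -> SparseLogits:
        return self._data[(epoch, index)]


class WithTeacherLogits:
    """Hypothetical dataset wrapper: returns each sample with its saved sparse logits."""

    def __init__(self, dataset: Sequence, store: SparseLogitStore, epoch: int) -> None:
        self.dataset, self.store, self.epoch = dataset, store, epoch

    def __len__(self) -> int:
        return len(self.dataset)

    def __getitem__(self, index: int):
        return self.dataset[index], self.store.load(self.epoch, index)


teacher_calls = 0


def toy_teacher(x: float) -> List[float]:
    """Stand-in for an expensive teacher forward pass (4-class toy logits)."""
    global teacher_calls
    teacher_calls += 1
    return [x, 2 * x, -x, 0.5 * x]


dataset = [1.0, 2.0, 3.0]

# Phase 1: run the teacher once per sample and save its top-2 logits.
store = SparseLogitStore()
for i, x in enumerate(dataset):
    store.save(epoch=0, index=i, logits=toy_teacher(x), k=2)

# Phase 2: several students reuse the same saved logits; the teacher is not called again.
reads = 0
wrapped = WithTeacherLogits(dataset, store, epoch=0)
for student_name in ("student_a", "student_b"):
    for i in range(len(wrapped)):
        sample, (values, indices) = wrapped[i]
        reads += 1  # a real loop would train the student on `sample` against (values, indices)
```

The teacher is called three times (once per sample), while the two students together read the cache six times. That asymmetry is the saving the Efficient Distillation feature describes.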

| Model | Pretrain | Input | Acc@1 | Acc@5 | #Params | MACs | FPS | 22k Model | 1k Model |
|---|---|---|---|---|---|---|---|---|---|
| TinyViT-5M | IN-22k | 224x224 | 80.7 | 95.6 | 5.4M | 1.3G | 3,060 | link/config/log | link/config/log |
| TinyViT-11M | IN-22k | 224x224 | 83.2 | 96.5 | 11M | 2.0G | 2,468 | link/config/log | link/config/log |
| TinyViT-21M | IN-22k | 224x224 | 84.8 | 97.3 | 21M | 4.3G | 1,571 | link/config/log | link/config/log |
| TinyViT-21M-384 | IN-22k | 384x384 | 86.2 | 97.8 | 21M | 13.8G | 394 | - | link/config/log |
| TinyViT-21M-512 | IN-22k | 512x512 | 86.5 | 97.9 | 21M | 27.0G | 167 | - | link/config/log |
| TinyViT-5M | IN-1k | 224x224 | 79.1 | 94.8 | 5.4M | 1.3G | 3,060 | - | link/config/log |
| TinyViT-11M | IN-1k | 224x224 | 81.5 | 95.8 | 11M | 2.0G | 2,468 | - | link/config/log |
| TinyViT-21M | IN-1k | 224x224 | 83.1 | 96.5 | 21M | 4.3G | 1,571 | - | link/config/log |
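The TinyViT-21M rows in the table above show compute and latency growing faster than the pixel count as the input resolution increases. The snippet below only recomputes ratios from those rows and makes no claim about the cause.

```python
# Values copied from the TinyViT-21M (IN-22k) rows of the table above.
rows = {224: {"macs_g": 4.3, "fps": 1571},
        384: {"macs_g": 13.8, "fps": 394},
        512: {"macs_g": 27.0, "fps": 167}}

base = rows[224]
ratios = {}
for res, row in rows.items():
    pixel_ratio = (res / 224) ** 2              # growth in input pixels vs. 224x224
    macs_ratio = row["macs_g"] / base["macs_g"]  # growth in MACs
    slowdown = base["fps"] / row["fps"]          # drop in throughput
    ratios[res] = (round(pixel_ratio, 2), round(macs_ratio, 2), round(slowdown, 2))
    print(f"{res}x{res}: pixels x{pixel_ratio:.2f}, MACs x{macs_ratio:.2f}, FPS /{slowdown:.2f}")
```

At 512x512 the input has about 5.2 times as many pixels as at 224x224, while MACs grow about 6.3 times and throughput drops about 9.4 times.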

ImageNet-22k (IN-22k) is the same as ImageNet-21k (IN-21k), where the number of classes is 21,841.

The models listed with IN-22k in the Pretrain column are pretrained on ImageNet-22k with distillation from CLIP-ViT-L/14-22k, then finetuned on ImageNet-1k.

We finetune the 1k models on IN-1k to higher resolution progressively (224 -> 384 -> 512) [detail], without any IN-1k knowledge distillation.

🔰 Here are the setup tutorial and evaluation scripts.

🔰 For the proposed fast pretraining distillation, we first save the teacher's sparse logits, then pretrain a model.
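For the second step, pretraining against the saved logits, a student loss can be computed directly from the sparse targets without building a dense label. This minimal sketch assumes the stored values are teacher probabilities for the top-K classes. It also assumes the remaining mass is spread uniformly over the other classes. `sparse_soft_cross_entropy` is a hypothetical helper, not the repository's loss function.

```python
import math
from typing import List, Sequence


def log_softmax(logits: Sequence[float]) -> List[float]:
    # log p_i = z_i - logsumexp(z), with the max subtracted for stability.
    m = max(logits)
    lse = m + math.log(sum(math.exp(z - m) for z in logits))
    return [z - lse for z in logits]


def sparse_soft_cross_entropy(student_logits: Sequence[float],
                              values: Sequence[float],
                              indices: Sequence[int]) -> float:
    """Cross-entropy between a sparse teacher label and the student's softmax."""
    logp = log_softmax(student_logits)
    num_classes, k = len(logp), len(indices)
    # Probability assigned to each class outside the saved top-k (assumed uniform).
    fill = (1.0 - sum(values)) / (num_classes - k) if num_classes > k else 0.0
    top_term = sum(v * logp[i] for v, i in zip(values, indices))
    rest_logp = sum(logp) - sum(logp[i] for i in indices)
    return -(top_term + fill * rest_logp)


teacher_values, teacher_indices = [0.7, 0.2], [2, 0]  # saved top-2 teacher probabilities
agree = sparse_soft_cross_entropy([1.0, 0.0, 4.0, 0.0], teacher_values, teacher_indices)
disagree = sparse_soft_cross_entropy([4.0, 0.0, 1.0, 0.0], teacher_values, teacher_indices)
uniform = sparse_soft_cross_entropy([0.0, 0.0, 0.0, 0.0], teacher_values, teacher_indices)
```

A student whose prediction matches the teacher's top class gets a lower loss than one that contradicts it. A uniform student scores exactly log C, because the reconstructed target still sums to one.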

If this repo is helpful to you, please consider citing it. 📣 Thank you! :)

```bibtex
@InProceedings{tiny_vit,
  title={TinyViT: Fast Pretraining Distillation for Small Vision Transformers},
  author={Wu, Kan and Zhang, Jinnian and Peng, Houwen and Liu, Mengchen and Xiao, Bin and Fu, Jianlong and Yuan, Lu},
  booktitle={European conference on computer vision (ECCV)},
  year={2022}
}
```

🎉 We would like to acknowledge the following research work that cites and utilizes our method:

MobileSAM (Faster Segment Anything: Towards Lightweight SAM for Mobile Applications)

```bibtex
@article{zhang2023faster,
  title={Faster Segment Anything: Towards Lightweight SAM for Mobile Applications},
  author={Zhang, Chaoning and Han, Dongshen and Qiao, Yu and Kim, Jung Uk and Bae, Sung Ho and Lee, Seungkyu and Hong, Choong Seon},
  journal={arXiv preprint arXiv:2306.14289},
  year={2023}
}
```

Our code is based on Swin Transformer, LeViT, pytorch-image-models, CLIP and PyTorch. Thanks to the contributors for their awesome work!